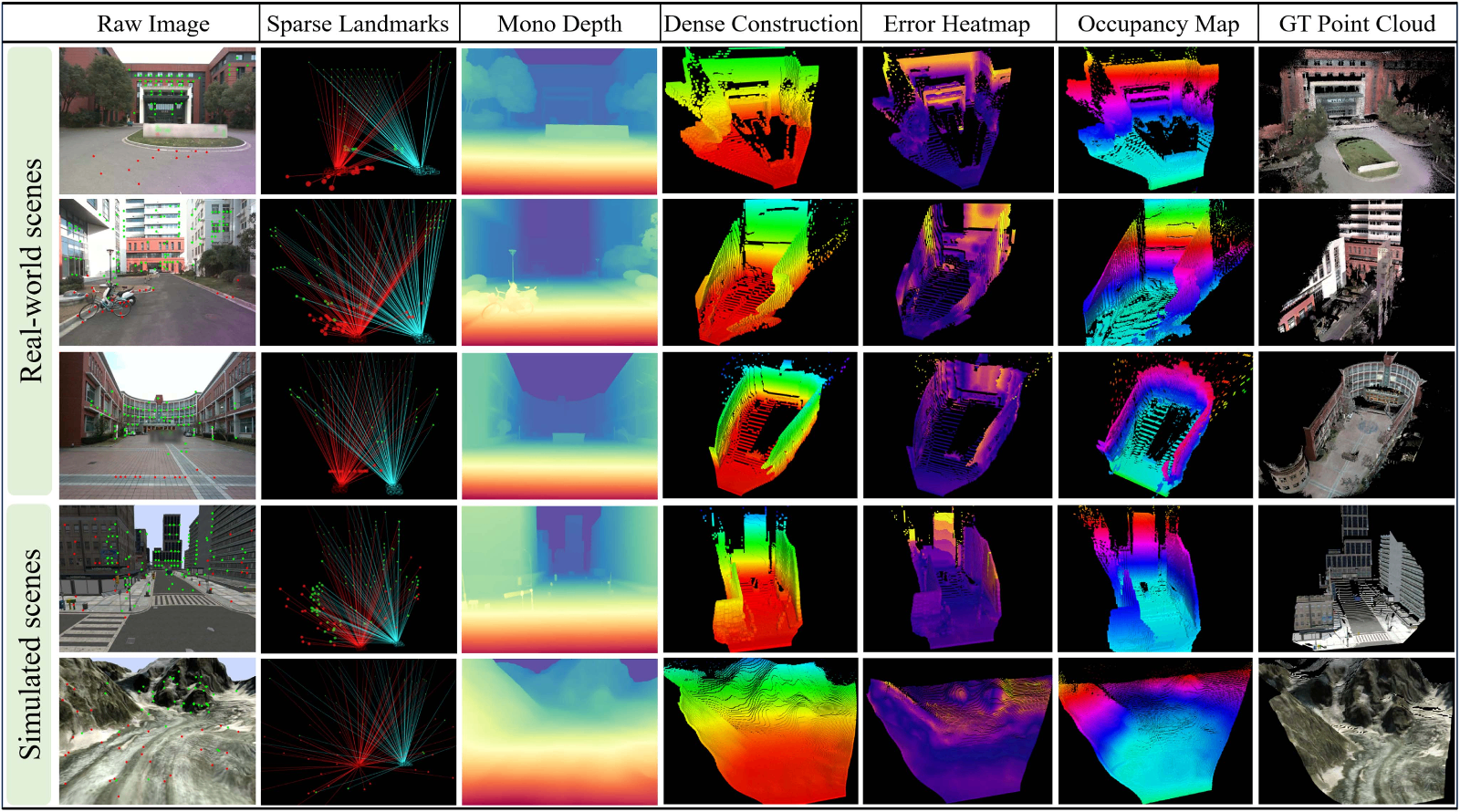

Recently, the team led by Prof. Wei Dong from Shanghai Jiao Tong University, in collaboration with Prof. Xingxing Zuo from Mohamed Bin Zayed University of Artificial Intelligence (MBZUAI), published a paper titled “Flying Co-Stereo: Enabling Long-Range Aerial Dense Mapping via Collaborative Stereo Vision of Dynamic-Baseline” in IEEE Transactions on Robotics. This work presents a flying collaborative stereo vision system in which two UAVs form a wide-baseline configuration to enable long-range dense 3D mapping. The proposed system achieves dense reconstruction at distances of up to 70 meters, with a relative error ranging from 2.3% to 9.7%.

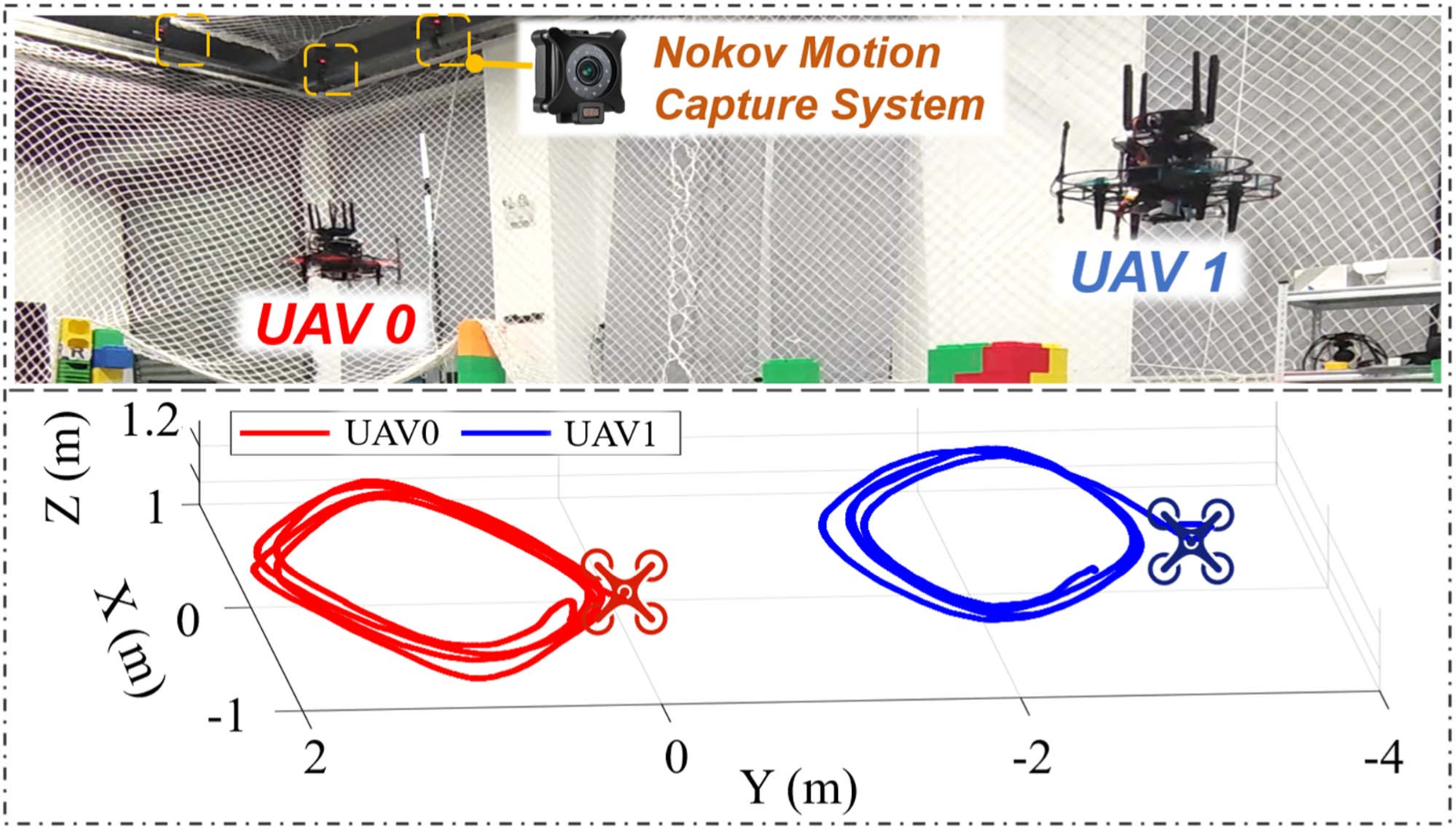

The NOKOV motion capture system provided high-precision ground-truth pose data for validating the proposed relative pose estimation algorithm.

Background

For UAVs operating in large-scale unknown environments, long-range perception is essential for safe navigation. Compared with LiDAR systems, stereo cameras offer advantages in terms of cost-effectiveness and lightweight design. However, conventional stereo cameras are constrained by short fixed baselines, which typically limit their perception range to within 20 meters. Existing wide-baseline systems are often too large to be deployed on small UAV platforms. Meanwhile, distributing stereo cameras across two dynamically flying UAVs introduces additional challenges, including dynamically varying baselines and difficulties in cross-view feature association.
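To make the baseline limitation concrete, the sketch below applies the standard first-order stereo error model, in which depth uncertainty grows with the square of the distance and shrinks with the baseline: δZ ≈ Z² / (f · B) · δd. The focal length, disparity error, and baseline values are illustrative assumptions, not parameters reported in the paper.

```python
def depth_error(depth_m, baseline_m, focal_px, disparity_err_px=0.5):
    """First-order stereo depth uncertainty, in meters.

    From Z = f * B / d, a disparity error of delta_d pixels maps to
    a depth error of roughly Z^2 / (f * B) * delta_d.
    """
    if baseline_m <= 0 or focal_px <= 0:
        raise ValueError("baseline and focal length must be positive")
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


# Assumed values for illustration only (not taken from the paper).
FOCAL_PX = 400.0

# A compact onboard stereo camera with a 0.1 m baseline at 20 m:
# the error is already about 5 m, i.e. roughly 25% of the distance.
short_baseline_err = depth_error(20.0, 0.1, FOCAL_PX)

# Two UAVs flying 2 m apart, observing a target at 70 m:
# the error is about 3 m, i.e. roughly 4% of the distance.
wide_baseline_err = depth_error(70.0, 2.0, FOCAL_PX)

print(f"0.1 m baseline at 20 m: {short_baseline_err:.2f} m")
print(f"2.0 m baseline at 70 m: {wide_baseline_err:.2f} m")
```

Under these assumed numbers, widening the baseline by a factor of 20 more than offsets the quadratic growth of the error with distance, which is the motivation for spreading the two cameras across separate UAVs.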

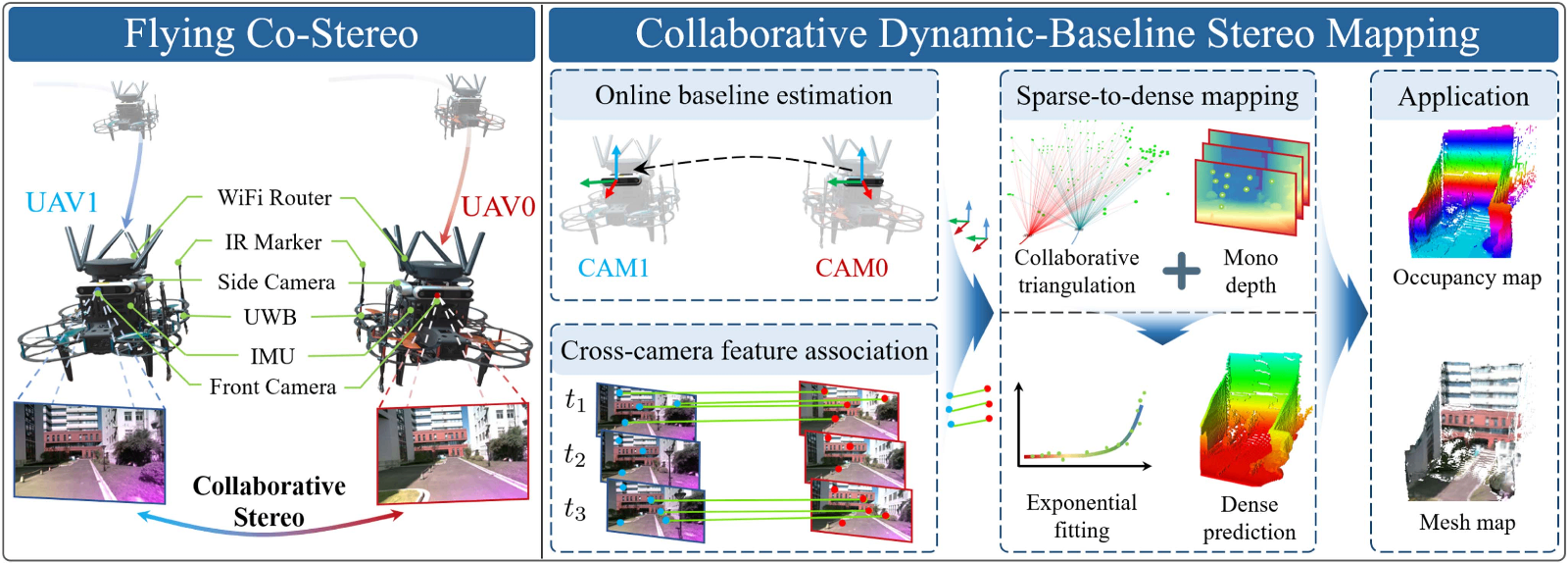

System architecture of Flying Co-Stereo within our proposed CDBSM framework

Contributions

1) A Flying Co-Stereo system is proposed, in which two collaborative UAVs form a wide-baseline, cross-agent stereo vision setup within a unified CDBSM framework, enabling long-range dense mapping in large-scale unknown environments.

2) A DS-VIRE is developed to achieve robust and accurate online estimation of the dynamic inter-UAV baseline in complex outdoor conditions.

3) A hybrid visual feature association strategy is designed, combining cross-agent deep matching with intra-agent feature tracking, to ensure real-time and persistent co-visible feature correspondences under varying viewpoints.

4) A sparse-to-dense depth recovery scheme is proposed, which refines dense monocular depth predictions using exponential fitting of long-range triangulated sparse landmarks for precise metric-scale mapping.
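The idea behind contribution 4 can be illustrated with a minimal sketch. The article states only that dense monocular predictions are refined by exponential fitting against triangulated sparse landmarks; the specific model form z = a · exp(b · m), the log-space least-squares fit, and the function names `fit_exponential` and `refine_depth` are assumptions made here for illustration, not the authors' implementation.

```python
import math


def fit_exponential(mono_values, metric_depths):
    """Fit z = a * exp(b * m) by linear least squares on log(z).

    mono_values:   monocular depth predictions sampled at the landmark pixels
    metric_depths: triangulated metric depths of the same landmarks (> 0)
    Returns the assumed model parameters (a, b).
    """
    n = len(mono_values)
    if n < 2 or n != len(metric_depths):
        raise ValueError("need at least two matched landmark samples")
    logs = [math.log(z) for z in metric_depths]
    mean_m = sum(mono_values) / n
    mean_l = sum(logs) / n
    var_m = sum((m - mean_m) ** 2 for m in mono_values)
    if var_m == 0:
        raise ValueError("monocular values must not all be identical")
    cov = sum((m - mean_m) * (l - mean_l) for m, l in zip(mono_values, logs))
    b = cov / var_m
    a = math.exp(mean_l - b * mean_m)
    return a, b


def refine_depth(mono_map, a, b):
    """Map every pixel of a relative depth map to metric depth."""
    return [[a * math.exp(b * m) for m in row] for row in mono_map]


# Synthetic example: four sparse landmarks generated from a known curve.
landmark_mono = [0.1, 0.4, 0.7, 0.9]
landmark_metric = [5.0 * math.exp(2.5 * m) for m in landmark_mono]

a, b = fit_exponential(landmark_mono, landmark_metric)
dense_metric = refine_depth([[0.2, 0.5], [0.8, 1.0]], a, b)
print(f"a={a:.3f}, b={b:.3f}, farthest pixel={dense_metric[1][1]:.1f} m")
```

In this sketch the sparse landmarks supply the metric scale while the monocular network supplies per-pixel density; a real pipeline would also need outlier rejection before fitting, which is omitted here.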

Experimental Validation

Relative pose estimation experiments for Flying Co-Stereo, conducted under the NOKOV motion capture system

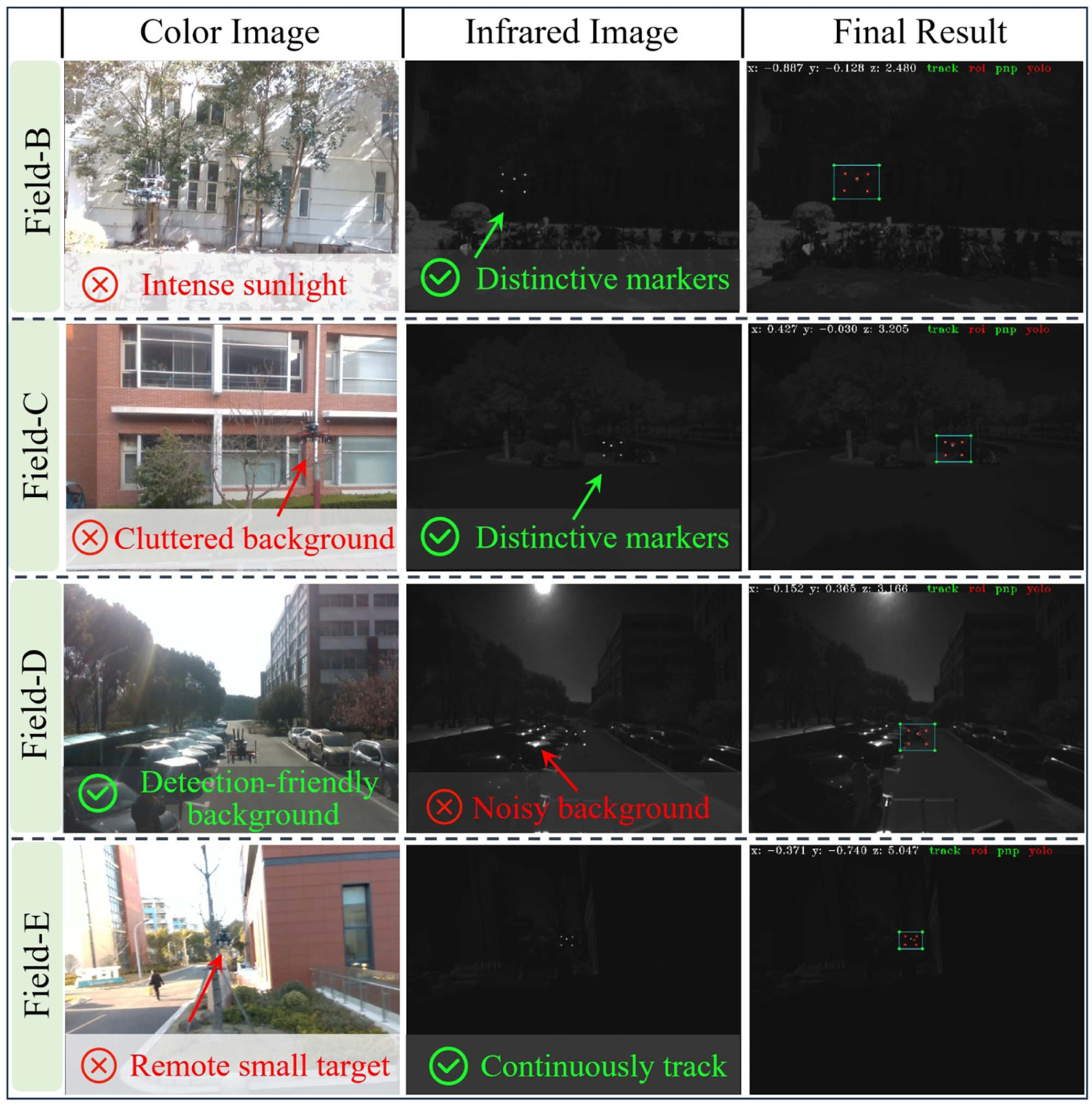

Experiments with DS-MVDT under challenging conditions: intense sunlight, cluttered backgrounds, light noise, and remote observation.

Corresponding Authors

Wei Dong

Tenured associate professor at the School of Mechanical Engineering, Shanghai Jiao Tong University. His research focuses on multi-robot collaboration and active perception.

Xingxing Zuo

Tenured assistant professor in the Department of Robotics at Mohamed Bin Zayed University of Artificial Intelligence. His research interests include robotics, spatial intelligence, state estimation, and embodied intelligence.

At the upcoming ICRA 2026, Prof. Xingxing Zuo, together with international scholars, will organize the workshop titled “MM-SpatialAI: Multi-Modal Spatial AI for Robust Navigation and Open-World Understanding.”

NOKOV Motion Capture is a sponsor of this workshop. Researchers in related fields are welcome to participate and contribute to advancing multimodal spatial intelligence for robust navigation and open-world understanding.

The workshop homepage is https://xingxingzuo.github.io/MM-SpatialAI/.